When mixing ZFS and KVM, should you put your virtual machine images on ZVOLs, or on .qcow2 files on plain datasets? It’s a topic that pops up a lot, usually with a ton of people weighing in on performance without having actually done any testing. My old benchmarks are getting a little long in the tooth, so here’s an fio random write run with 4K blocksize, done on both a .qcow2 on a dataset, and a zvol.

Test Configuration

Host:

CPU : Intel(R) Xeon(R) CPU E3-1230 v5 @ 3.40GHz

RAM : 32 GB DDR4 SDRAM

SATA : Intel Corporation Sunrise Point-H SATA controller [AHCI mode] (rev 31)

OS : Ubuntu 16.04.4 LTS, fully updated as of 2018-03-13

FS : ZFS 0.6.5.6-0ubuntu19, from Canonical main repo

Disks : 2x Samsung 850 Pro 1TB SATA3, mirror vdev

ZFS parameters: ashift=13,recordsize=8K,atime=off,compression=lz4

Guest:

CPU : Intel Core Processor (Broadwell), 2 threads

RAM : 512MB

OS : Ubuntu 16.04.4 LTS, fully updated as of 2018-03-13

FS : ext4

Disks: /mnt/zvol on 20G zvol volume, /mnt/qcow2 on 20G .qcow2 file
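For reference, a layout like the one listed above could be built roughly as follows. This is a sketch under assumptions, not the exact commands used for these benchmarks: the pool name, the `benchmark-zvol` and `images` names, and the device paths are all placeholders. With `DRY_RUN=1` the function only prints the commands it would run.

```shell
# Sketch only: pool, dataset, zvol, and device names are hypothetical.

# Print the command instead of executing it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# build_test_storage POOL DISK1 DISK2
build_test_storage() {
    pool=$1 disk1=$2 disk2=$3

    # Mirror vdev with the properties listed under "ZFS parameters".
    # ashift is a pool property (-o); the others are dataset properties (-O).
    run zpool create -o ashift=13 \
        -O recordsize=8K -O atime=off -O compression=lz4 \
        "$pool" mirror "$disk1" "$disk2" || return 1

    # 20G zvol to hand to the guest as a raw block device.
    run zfs create -V 20G "$pool/benchmark-zvol" || return 1

    # Plain dataset holding a 20G qcow2 file (default cluster size).
    run zfs create "$pool/images" || return 1
    run qemu-img create -f qcow2 "/$pool/images/benchmark.qcow2" 20G
}

# Example (prints the commands without touching any disks):
#   DRY_RUN=1 build_test_storage tank /dev/disk/by-id/ssd-a /dev/disk/by-id/ssd-b
```

The qcow2 path assumes the pool is mounted at its default mountpoint, `/POOL`.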

Synchronous 4K write results

ZVOL, --ioengine=sync:

root@benchmark:/mnt/zvol# fio --name=random-write --ioengine=sync --iodepth=4 \

--rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16 \

--end_fsync=1

[...]

Run status group 0 (all jobs):

WRITE: io=4096.0MB, aggrb=50453KB/s, minb=3153KB/s, maxb=3153KB/s, mint=83116msec, maxt=83132msec

QCOW2, --ioengine=sync:

root@benchmark:/mnt/qcow2# fio --name=random-write --ioengine=sync --iodepth=4 \

--rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16 \

--end_fsync=1

[...]

Run status group 0 (all jobs):

WRITE: io=4096.0MB, aggrb=45767KB/s, minb=2860KB/s, maxb=2976KB/s, mint=88058msec, maxt=91643msec

So, 50.5 MB/sec (zvol) vs 45.8 MB/sec (qcow2). Yes, there’s a difference, at least on the most punishing I/O workloads. Is it perceptible enough to matter? Probably not, for most use cases, given the benefits in ease of management and maintenance for .qcow2 on datasets. QCOW2 files are easier to provision, you don’t have to worry about refreservation keeping you from taking snapshots, and they’re not significantly more difficult to mount offline (modprobe nbd ; qemu-nbd -c /dev/nbd0 /path/to/image.qcow2 ; mount -o ro /dev/nbd0 /mnt/image or similar). Probably most importantly, filling the underlying storage beneath a qcow2 won’t crash the guest.
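The offline mount can be spelled out a little more fully, including the cleanup afterwards. Treat this as a sketch: the image path, the mountpoint, and the partition number are assumptions, and an image with no partition table would be mounted from the bare nbd device rather than from a `p1` partition. With `DRY_RUN=1` the functions only print the commands.

```shell
# Sketch only: image path, mountpoint, and partition number are assumptions.

# Print the command instead of executing it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# mount_qcow2_ro IMAGE MOUNTPOINT [NBD_DEVICE] [PARTITION]
mount_qcow2_ro() {
    image=$1 mountpoint=$2 nbd=${3:-/dev/nbd0} part=${4:-1}

    # max_part lets the kernel expose partitions as /dev/nbd0p1, p2, ...
    run modprobe nbd max_part=8 || return 1
    # Attach the image read-only so the offline mount cannot modify it.
    run qemu-nbd --read-only --connect="$nbd" "$image" || return 1
    run mount -o ro "${nbd}p${part}" "$mountpoint"
}

# unmount_qcow2 MOUNTPOINT [NBD_DEVICE]
unmount_qcow2() {
    run umount "$1" || return 1
    # Detach the image so the nbd device can be reused.
    run qemu-nbd --disconnect "${2:-/dev/nbd0}"
}

# Example:
#   mount_qcow2_ro /path/to/image.qcow2 /mnt/image
#   unmount_qcow2 /mnt/image
```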

Tuning QCOW2 for even better performance

I found out yesterday that you can tune the underlying cluster size of the .qcow2 format. Creating a new .qcow2 file tuned to use 8K clusters – matching our 8K recordsize, and the 8K underlying hardware blocksize of the Samsung 850 Pro drives in our vdev – produced tremendously better results. With the tuned qcow2, we more than tripled the performance of the zvol – going from 50.5 MB/sec (zvol) to 170 MB/sec (8K tuned qcow2)!
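Cluster size is chosen when the image is created, via `qemu-img create -o cluster_size=...`. A minimal sketch, assuming a hypothetical image path and the same 20G size as the test disks; with `DRY_RUN=1` it only prints the commands:

```shell
# Sketch only: the image path and size passed in are placeholders.

# Print the command instead of executing it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# create_tuned_qcow2 PATH SIZE
create_tuned_qcow2() {
    # 8K clusters to match recordsize=8K on the dataset holding the image.
    run qemu-img create -f qcow2 -o cluster_size=8K "$1" "$2" || return 1
    # The cluster_size line in the info output should read 8192.
    run qemu-img info "$1"
}

# Example:
#   create_tuned_qcow2 /path/to/tuned.qcow2 20G
```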

QCOW2 -o cluster_size=8K, --ioengine=sync:

root@benchmark:/mnt/qcow2# fio --name=random-write --ioengine=sync --iodepth=4 \

--rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16 \

--end_fsync=1

[...]

Run status group 0 (all jobs):

WRITE: io=4096.0MB, aggrb=170002KB/s, minb=10625KB/s, maxb=12698KB/s, mint=20643msec, maxt=24672msec

ZVOL won’t pause the guest if storage is unavailable

If you fill the underlying pool with a guest that’s using a zvol for its storage, the filesystem in the guest will panic. From the guest’s perspective, this is a hardware I/O error, and the guest and/or its apps which use that virtual disk will crash, leaving it in an unknown and possibly corrupt state.

If the guest uses a .qcow2 file on a dataset for storage, the same problem is handled much more safely. When writes become unavailable on host storage, the guest will be automatically paused by libvirt. This gives you a chance to free up space, then virsh resume the guest. The net effect is that the guest and its apps never realize there was ever a problem in the first place. Any pending writes complete automatically and without error once you’ve cleared the host storage problem and resumed the guest.

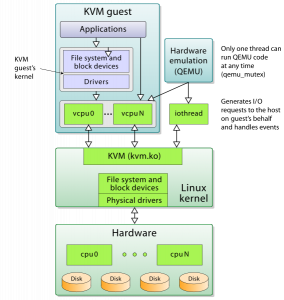
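The recovery step can be sketched as a small helper around `virsh domstate` and `virsh resume`. The domain name is a placeholder, and the helper assumes you have already freed space on the host; it only checks the state and resumes a guest that libvirt reports as paused.

```shell
# Sketch only: the domain name passed in is a placeholder.

# resume_if_paused DOMAIN
resume_if_paused() {
    domain=$1
    # With --reason, virsh prints the state followed by why it is in that state.
    state=$(virsh domstate "$domain" --reason) || return 1
    printf '%s: %s\n' "$domain" "$state"
    case $state in
        paused*) virsh resume "$domain" ;;
        *) printf 'not paused, nothing to do\n' ;;
    esac
}

# Example, after freeing space on the host:
#   resume_if_paused benchmark
```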

ZVOL doesn’t honor guest synchronous writes

It may also be worth noting that the guest seems a little less clued in with what’s going on with its storage when using the zvol. I specified --ioengine=sync for these test runs, which should – repeat, should – have made the also-specified parameter end_fsync=1 irrelevant, since all writes were supposed to be synchronous.

On the .qcow2-hosted storage, the data was written verifiably sync, since we can see there’s no pause at the end for end_fsync=1 to finish flushing the data to the metal:

Jobs: 16 (f=16): [w(16)] [66.7% done] [0KB/75346KB/0KB /s]

Jobs: 16 (f=16): [w(16)] [68.0% done] [0KB/0KB/0KB /s]

Jobs: 16 (f=16): [w(16)] [72.0% done] [0KB/263.8MB/0KB /s]

Jobs: 16 (f=16): [w(8),F(1),w(7)] [80.0% done] [0KB/199.1MB/0KB /s]

Jobs: 15 (f=15): [w(8),_(1),w(7)] [80.8% done] [0KB/53866KB/0KB /s]

Jobs: 15 (f=15): [w(3),F(1),w(4),_(1),w(3),F(1),w(3)] [84.6% done]

Jobs: 12 (f=12): [F(1),w(2),_(1),w(4),_(2),w(2),_(1),w(3)] [85.2% done]

Jobs: 8 (f=8): [_(4),w(4),_(2),w(2),_(1),w(1),_(1),w(1)] [88.9% done]

Jobs: 4 (f=3): [_(4),F(1),_(1),w(1),_(3),F(1),_(4),w(1)] [100.0% done]

random-readwrite: (groupid=0, jobs=1): err= 0: pid=1773: Tue Mar 13 13:57:16 2018

The ZVOL hosted storage, on the other hand, clearly was not honoring ioengine=sync, as it spent a significant amount of time after all data was supposedly already written, waiting for end_fsync=1 to finish:

Jobs: 16 (f=16): [w(16)] [81.0% done] [0KB/527.2MB/0KB /s]

Jobs: 16 (f=16): [w(10),F(1),w(5)] [94.7% done] [0KB/551.6MB/0KB /s]

Jobs: 16 (f=16): [F(16)] [100.0% done] [0KB/155.2MB/0KB /s]

Jobs: 16 (f=16): [F(16)] [100.0% done] [0KB/0KB/0KB /s] [0/0/0 iops]

Jobs: 16 (f=16): [F(16)] [100.0% done] [0KB/0KB/0KB /s] [0/0/0 iops]

Jobs: 16 (f=16): [F(16)] [100.0% done] [0KB/0KB/0KB /s] [0/0/0 iops]

------[[[ above line repeats for 60 more lines ]]]------

Jobs: 16 (f=16): [F(16)] [100.0% done] [0KB/0KB/0KB /s] [0/0/0 iops]

random-readwrite: (groupid=0, jobs=1): err= 0: pid=1792: Tue Mar 13 13:57:42 2018

This strikes me as pretty disturbing; you could end up in a world of hurt if you’re expecting your host to honor the guest’s synchronous writes when, in fact, it’s not.
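One way to probe this more directly, offered as a suggestion rather than something tested for this post: in fio, `--ioengine=sync` selects the plain read(2)/write(2) I/O engine, and with `--direct=0` those writes can still sit in the guest's page cache until they are flushed. fio's `--sync=1` option asks for the files to be opened with O_SYNC (and `--fsync=1` would instead issue an fsync after every write), so a run with either option makes each 4K write wait for stable storage. The job name and target directory below are placeholders, and with `DRY_RUN=1` the function only prints the command.

```shell
# Sketch only: job name and target directory are placeholders.

# Print the command instead of executing it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# run_osync_randwrite DIRECTORY
run_osync_randwrite() {
    # Same workload as the runs above, but with O_SYNC requested explicitly
    # and no end_fsync, since every write is already flushed.
    run fio --name=random-write-osync --directory="$1" \
        --ioengine=sync --sync=1 \
        --rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16
}

# Example:
#   run_osync_randwrite /mnt/zvol
#   run_osync_randwrite /mnt/qcow2
```

Expect much lower throughput than in the runs above, since nothing can be batched in the guest's cache.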

Asynchronous 4K write results

Well, hrm. Realizing now that zvol storage doesn’t actually honor synchronous write requests very well, what if we use the libaio (native Linux asynchronous I/O) engine instead?

ZVOL, --ioengine=libaio:

root@benchmark:/mnt/zvol# fio --name=random-write --ioengine=libaio --iodepth=4 \

--rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16 \

--end_fsync=1

[...]

Run status group 0 (all jobs):

WRITE: io=4096.0MB, aggrb=139484KB/s, minb=8717KB/s, maxb=8722KB/s, mint=30054msec, maxt=30070msec

QCOW2, --ioengine=libaio:

root@benchmark:/mnt/qcow2# fio --name=random-write --ioengine=libaio --iodepth=4 \

--rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16 \

--end_fsync=1

[...]

Run status group 0 (all jobs):

WRITE: io=4096.0MB, aggrb=164392KB/s, minb=10274KB/s, maxb=11651KB/s, mint=22498msec, maxt=25514msec

And there you have it: qcow2 at 164 MB/sec vs zvol at 139 MB/sec. So when using asynchronous I/O, the qcow2-backed virtual disk actually finished the fio run faster than the zvol-backed disk.

What if we tune the .qcow2 for 8K cluster size, like we did above in the synchronous write test?

QCOW2 -o cluster_size=8K, --ioengine=libaio:

root@benchmark:/mnt/qcow2# fio --name=random-write --ioengine=libaio --iodepth=4 \

--rw=randwrite --bs=4k --direct=0 --size=256m --numjobs=16 \

--end_fsync=1

[...]

Run status group 0 (all jobs):

WRITE: io=4096.0MB, aggrb=181304KB/s, minb=11331KB/s, maxb=13543KB/s, mint=19356msec, maxt=23134msec

The improvements aren’t as drastic here – 181 MB/sec (tuned qcow2) vs 164 MB/sec (default qcow2) vs 139 MB/sec (zvol) – but they’re still a clear improvement, and the qcow2 storage is still faster than the zvol. (If anybody knows similar tuning that can be done to the zvol to improve its numbers, please tweet or DM me @jrssnet.)
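If you want to repeat the comparison on your own hardware, the ratios are easy to pull out of saved fio output. This is a small sketch that assumes the fio 2.x summary format shown in this post (an `aggrb=...KB/s` field); the function names are made up for illustration, and newer fio releases label the field differently.

```shell
# Sketch only: assumes fio 2.x summary lines containing "aggrb=<n>KB/s".

# aggrb_kbs: read fio output on stdin, print the aggregate bandwidth in KB/s.
aggrb_kbs() {
    sed -n 's/.*aggrb=\([0-9][0-9]*\)KB\/s.*/\1/p' | head -n 1
}

# speedup BASELINE_KBS CANDIDATE_KBS: print candidate/baseline to two decimals.
speedup() {
    awk -v base="$1" -v cand="$2" 'BEGIN { printf "%.2f\n", cand / base }'
}

# Example, with fio output saved to two hypothetical log files:
#   zvol=$(aggrb_kbs < zvol.log)
#   qcow2=$(aggrb_kbs < qcow2-8k.log)
#   speedup "$zvol" "$qcow2"
```

Fed the numbers from the runs above, `speedup 50453 170002` gives 3.37 for the synchronous test and `speedup 139484 181304` gives 1.30 for the libaio test.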

Conclusion: .qcow2 FTW

For me, it’s a no-brainer: qcow2 files are only slightly slower on even the most punishing I/O workloads under default, untuned configuration, while being MUCH easier to manage, and arguably safer (won’t crash the guest if the host fills up the storage, honors sync write requests more predictably). And if you take the time to tune the .qcow2 on creation, they actually outperform the zvol. Winner: .qcow2.